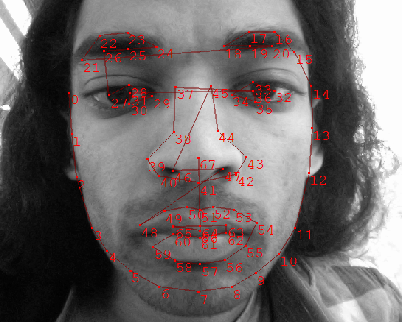

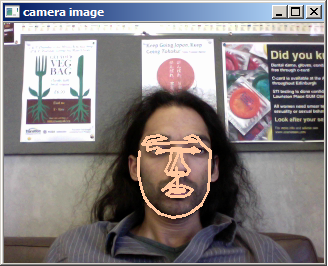

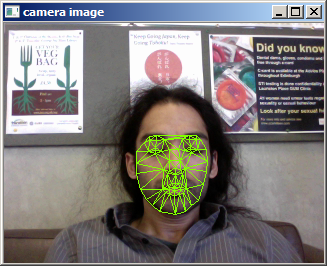

I’ve been working on a method for automatic head-pose tracking, and along the way I have ended up modeling facial appearance. I start by initializing a facial bounding box with the Viola-Jones detector, a well-known and robust object detector that is widely used for faces. This lets me center the face. Once I know where the face sits in the image, I can register an Active Shape Model like so:
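For the detection step that precedes the shape-model fit, here is a minimal sketch, assuming OpenCV's Python bindings and the frontal-face Haar cascade that ships with them. The helper names (`largest_face`, `centered_crop`, `detect_face`) and the `scale` padding factor are illustrative choices, not taken from my actual pipeline, and the Active Shape Model registration itself is not shown.

```python
def largest_face(boxes):
    """Return the (x, y, w, h) box with the largest area, or None if empty."""
    return max(boxes, key=lambda b: b[2] * b[3], default=None)


def centered_crop(box, image_shape, scale=1.2):
    """Square crop (x0, y0, x1, y1) centred on the face box, clipped to the image.

    `scale` is a hypothetical padding factor around the detected box.
    """
    x, y, w, h = box
    cx, cy = x + w / 2.0, y + h / 2.0
    half = scale * max(w, h) / 2.0
    height, width = image_shape[:2]
    x0 = max(int(round(cx - half)), 0)
    y0 = max(int(round(cy - half)), 0)
    x1 = min(int(round(cx + half)), width)
    y1 = min(int(round(cy + half)), height)
    return x0, y0, x1, y1


def detect_face(gray):
    """Run a Viola-Jones (Haar cascade) detector on a grayscale image."""
    import cv2  # imported here so the helpers above work without OpenCV

    cascade = cv2.CascadeClassifier(
        cv2.data.haarcascades + "haarcascade_frontalface_default.xml"
    )
    boxes = cascade.detectMultiScale(gray, scaleFactor=1.1, minNeighbors=5)
    return largest_face([tuple(int(v) for v in b) for b in boxes])
```

Given a grayscale frame `gray`, `centered_crop(detect_face(gray), gray.shape)` yields a face-centred window that can seed the shape-model fit, provided a face was found.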

After collecting multiple views that cover the possible appearance variations of my face, including slight rotations, I construct an appearance model.
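As a rough illustration of what building such a model can look like, the sketch below runs plain PCA over a stack of face images with NumPy. It assumes the images are already cropped and aligned to a common size, and it skips the shape-normalizing warp that a full appearance model would apply first. `build_appearance_model` and its parameters are hypothetical names.

```python
import numpy as np


def build_appearance_model(faces, n_components=2):
    """PCA over aligned face images.

    faces: array-like of shape (n_images, height, width), same size each.
    Returns (mean, basis, eigenvalues), where the rows of `basis` are
    orthonormal basis vectors ordered by decreasing variance.
    """
    X = np.asarray(faces, dtype=float)
    X = X.reshape(len(X), -1)            # one flattened image per row
    mean = X.mean(axis=0)
    # SVD of the centred data gives the principal directions in Vt.
    _, S, Vt = np.linalg.svd(X - mean, full_matrices=False)
    basis = Vt[:n_components]
    eigenvalues = (S[:n_components] ** 2) / (len(X) - 1)
    return mean, basis, eigenvalues
```

Using the SVD avoids forming the full pixel-by-pixel covariance matrix, which matters when each image has far more pixels than there are training views.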

The idea I am working with is to use the first few components of variation in this appearance model to determine pose. Here I show the first two basis vectors and the images they reconstruct:

![]()

![]()

As you may notice, these two basis vectors very neatly encode rotation. By looking at how strongly a given face projects onto each of them (its coefficients in the model), you can also interpret pose.
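One way to make this concrete is to project a face onto the basis vectors and to render what each basis vector does to the mean face. The sketch below assumes a model stored as a flattened mean image, a matrix whose rows are orthonormal basis vectors, and the matching eigenvalues. The function names are illustrative, and mapping a coefficient to an actual rotation angle would still need calibration that is not shown here.

```python
import numpy as np


def pose_coefficients(face, mean, basis):
    """Project a face image onto the basis; one coefficient per basis vector."""
    return basis @ (np.ravel(face).astype(float) - mean)


def render_mode(mean, basis, eigenvalues, mode, n_std, shape):
    """Image reconstructed by moving n_std standard deviations along one mode."""
    offset = n_std * np.sqrt(eigenvalues[mode]) * basis[mode]
    return (mean + offset).reshape(shape)
```

If the first mode tracks left-right rotation, the sign and size of `pose_coefficients(face, mean, basis)[0]` give a rough indication of how far the head is turned, and `render_mode` with `n_std` set to, say, -2 and +2 produces the two extremes of that mode.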

Comments for this entry

Please post code

Disappears in my browser (Firefox 20.0, Mac OSX 10.8) promptly upon display.

Labels do not appear on comment fields.