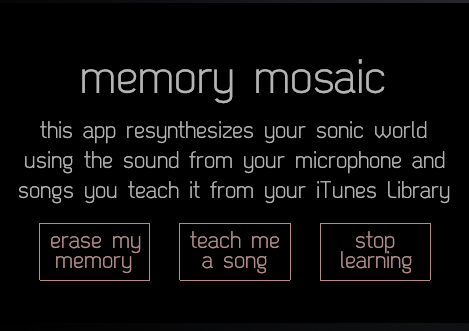

I had a chance to incorporate some updates into Memory Mosaic, an iOS app I started developing during my PhD in Audiovisual Scene Synthesis. The app organizes sounds in real time and clusters them by similarity. Whether the input comes from the device's microphone or an iTunes song, every detected onset triggers the creation of a new audio segment, which is then stored in a database. The video below shows how this works with Do Make Say Think's song, Minim and The Landlord is Dead:

Memory Mosaic iOS from Parag K Mital on Vimeo.
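The segment-then-cluster idea described above can be sketched in a few lines of Python. This is a hypothetical illustration, not the app's actual implementation. The energy-ratio onset detector, the two descriptors (RMS loudness and zero-crossing rate), the in-memory `SegmentDatabase` standing in for the database, and the `radius` threshold are all assumptions chosen for clarity.

```python
import math

def frame_energies(samples, frame_size):
    """RMS energy of consecutive, non-overlapping frames."""
    energies = []
    for start in range(0, len(samples) - frame_size + 1, frame_size):
        frame = samples[start:start + frame_size]
        energies.append(math.sqrt(sum(x * x for x in frame) / frame_size))
    return energies

def detect_onsets(samples, frame_size=256, ratio=2.0, floor=1e-3):
    """Sample indices where a frame's energy exceeds `floor` and is more
    than `ratio` times the previous frame's energy (a crude onset test)."""
    onsets, prev = [], 0.0
    for i, energy in enumerate(frame_energies(samples, frame_size)):
        if energy > floor and energy > ratio * prev:
            onsets.append(i * frame_size)
        prev = energy
    return onsets

def split_segments(samples, onsets):
    """Cut the signal into segments running from each onset to the next."""
    bounds = onsets + [len(samples)]
    return [samples[a:b] for a, b in zip(bounds, bounds[1:])]

def describe(segment):
    """Hypothetical feature vector: (RMS loudness, zero-crossing rate)."""
    rms = math.sqrt(sum(x * x for x in segment) / len(segment))
    crossings = sum(1 for a, b in zip(segment, segment[1:]) if (a < 0) != (b < 0))
    return (rms, crossings / max(len(segment) - 1, 1))

class SegmentDatabase:
    """Hypothetical in-memory stand-in for the segment database, with online
    clustering: a segment joins the nearest cluster if its features lie within
    `radius` of that cluster's centroid, otherwise it starts a new cluster."""

    def __init__(self, radius=0.1):
        self.radius = radius
        self.entries = []    # (features, cluster_id) for every stored segment
        self.centroids = []  # running-mean feature vector per cluster
        self.counts = []     # number of segments in each cluster

    def add(self, features):
        best, best_dist = None, math.inf
        for cid, centroid in enumerate(self.centroids):
            d = math.dist(features, centroid)
            if d < best_dist:
                best, best_dist = cid, d
        if best is None or best_dist > self.radius:
            best = len(self.centroids)
            self.centroids.append(tuple(features))
            self.counts.append(1)
        else:
            n = self.counts[best] + 1
            self.centroids[best] = tuple(
                c + (f - c) / n for c, f in zip(self.centroids[best], features))
            self.counts[best] = n
        self.entries.append((features, best))
        return best

# Demo on a synthetic signal: silence, low tone, high tone, low tone again.
def tone(freq, n, sr=8000, amp=0.5, phase=0.3):
    return [amp * math.sin(2 * math.pi * freq * i / sr + phase) for i in range(n)]

gap = [0.0] * 512
signal = gap + tone(125, 1024) + gap + tone(1000, 1024) + gap + tone(125, 1024) + gap

onsets = detect_onsets(signal)
db = SegmentDatabase(radius=0.1)
labels = [db.add(describe(seg)) for seg in split_segments(signal, onsets)]
print("onsets:", onsets)          # onsets: [512, 2048, 3584]
print("cluster labels:", labels)  # cluster labels: [0, 1, 0]
```

In this toy input the two low tones land in the same cluster and the high tone gets its own. A real system would likely use richer spectral descriptors and a tuned threshold; the threshold-based online assignment is shown here only because it can handle segments one at a time as they arrive, which fits real-time input.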

Here’s an example of using it with live instruments:

Memory Mosaic – Technical Demo (iOS App) from Parag K Mital on Vimeo.

The app also works with Audiobus, so you can combine it with other apps, for example by adding effects chains or by sampling from another app’s output. It is available on the iOS App Store: https://itunes.apple.com/us/app/memory-mosaic/id475759669?mt=8