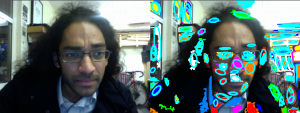

Working closely with my adviser Mick Grierson, I have developed a way to resynthesize existing videos using material from another set of videos. The process starts by learning a database of objects that appear in the set of videos to synthesize from. The target video is then broken into objects in the same manner, and each of its objects is matched to an object in the database. What you get is a resynthesis of the video that appears as beautiful disorder. Here are two examples: the first uses Family Guy to resynthesize The Simpsons, and the second uses Jan Svankmajer’s Food to resynthesize Jan Svankmajer’s Dimensions of Dialogue.
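The matching step can be pictured as a nearest-neighbour lookup. The sketch below is only an illustration under stated assumptions: the post does not say how objects are described or compared, so the three-value descriptors, the Euclidean distance, and the function names `nearest_object` and `resynthesize` are all hypothetical.

```python
import math

def nearest_object(target, database):
    """Return the index of the database descriptor closest to `target`."""
    return min(range(len(database)), key=lambda i: math.dist(target, database[i]))

def resynthesize(target_objects, database):
    """Swap every target descriptor for its nearest database descriptor."""
    return [database[nearest_object(t, database)] for t in target_objects]

# Hypothetical descriptors, e.g. the mean colour of a segmented object.
database = [(0.9, 0.1, 0.1), (0.1, 0.8, 0.2), (0.2, 0.2, 0.9)]
target_objects = [(0.8, 0.2, 0.2), (0.1, 0.1, 0.7)]

matches = resynthesize(target_objects, database)
```

A real system would presumably compare far richer descriptors and composite the matched pixels back into the frame; only the lookup itself is shown here.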

Archived entries for computer vision

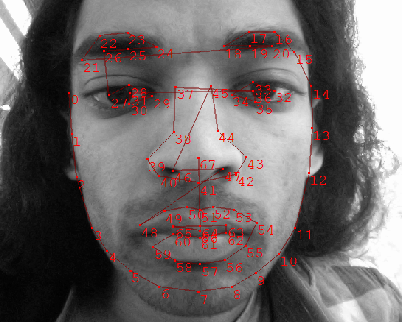

Facial Appearance Modeling/Tracking

I’ve been working on a method for automatic head-pose tracking, and along the way have come to model facial appearance. I start by initializing a facial bounding box using the Viola-Jones detector, a well-known and robust object detector. This allows me to center the face. Once I know where the 2D plane of the face is in an image, I can register an Active Shape Model like so:

After collecting multiple views that cover the possible appearance variations of my face, including slight rotations, I construct an appearance model.

The idea I am working with is to use the first components of variation of this appearance model to determine pose. Here I show the first two basis vectors and the images they reconstruct:


As you may notice, these two basis vectors very neatly encode rotation. By looking at the eigenvalues of the model, you can also interpret pose.…
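A minimal sketch of the idea, assuming pose is read from how strongly an image projects onto the leading basis vector of the appearance model. The three-pixel "images", the power-iteration routine, and the names `first_component` and `project` are illustrative assumptions, not the code behind the post.

```python
import math

def first_component(samples, iterations=100):
    """Mean and leading principal direction of `samples`, via power iteration."""
    n, dims = len(samples), len(samples[0])
    mu = [sum(col) / n for col in zip(*samples)]
    centred = [[x - m for x, m in zip(s, mu)] for s in samples]
    v = [float(j + 1) for j in range(dims)]  # arbitrary non-zero start vector
    for _ in range(iterations):
        # Apply the (unnormalised) covariance X^T X to v without forming it.
        scores = [sum(a * b for a, b in zip(row, v)) for row in centred]
        v = [sum(s * row[j] for s, row in zip(scores, centred)) for j in range(dims)]
        norm = math.sqrt(sum(x * x for x in v))
        v = [x / norm for x in v]
    return mu, v

def project(sample, mu, v):
    """Coefficient of `sample` along the basis vector `v`."""
    return sum((x - m) * c for x, m, c in zip(sample, mu, v))

# Hypothetical 3-pixel "faces": as the head turns, the left pixel brightens
# while the right pixel darkens.
views = [(3, 5, 7), (4, 5, 6), (5, 5, 5), (6, 5, 4), (7, 5, 3)]
mu, basis = first_component(views)
coeffs = [project(view, mu, basis) for view in views]
```

In this toy data the coefficients change monotonically from the first view to the last, which is the sense in which a single basis vector can "encode" rotation. The sign of a principal direction is arbitrary, so only the ordering is meaningful.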

Responsive Ecologies Documentation

As part of a system of numerous dynamic connections and networks, we react to, and are determined by, a complex system of cause and effect. The consequence of our actions upon ourselves, the society we live in, and the broader natural world is conditioned by how we perceive our involvement. The awareness of how we have impacted on a situation is often realised and processed subconsciously; the extent and scope of these actions can be far beyond our knowledge, our consideration, and, importantly, our sensory reception. With this in mind, how can we associate our actions, many of which may be overlooked as customary, with, for instance, honey bee depopulation syndrome or the declining numbers of Siberian tigers?

Responsive Ecologies is part of an ongoing collaboration with ZSL London Zoo and Musion Academy. Collectively, we have been exploring innovative means of public engagement to generate an awareness and understanding of nature and the effects of climate change. All of the contained footage has come from filming sessions within the Zoological Society; this has coincidentally raised some interesting questions on the spectacle of captivity, an issue which we have tried to reflect upon in the construction and presentation of …

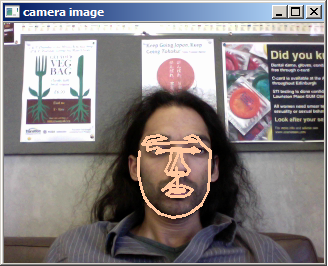

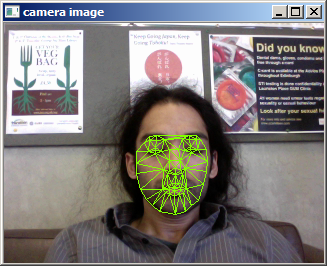

6DOF Head Tracking

The following demo uses SeeingMachines FaceAPI in openFrameworks to control a Mario avatar. It also has some really poor gesture recognition (and learning, though that’s not shown here); a threshold on the rotation DOF would have produced better results for the simple task of recognizing look up/down and left/right gestures.

6DOF Head Tracking from pkmital on Vimeo.

Interfacing SeeingMachines FaceAPI with openFrameworks to control a 3D Mario avatar.
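The thresholding alternative mentioned above is simple enough to sketch. This is not FaceAPI code: the function name `classify_gesture`, the 15-degree threshold, and the sign conventions (positive pitch means looking up, positive yaw means looking right) are all assumptions made for illustration.

```python
def classify_gesture(pitch_deg, yaw_deg, threshold_deg=15.0):
    """Map head rotation to a coarse gesture by thresholding pitch and yaw."""
    if abs(pitch_deg) < threshold_deg and abs(yaw_deg) < threshold_deg:
        return "neutral"
    # Whichever axis is rotated furthest decides the gesture.
    if abs(pitch_deg) >= abs(yaw_deg):
        return "up" if pitch_deg > 0 else "down"
    return "right" if yaw_deg > 0 else "left"

# Hypothetical (pitch, yaw) readings in degrees from a 6DOF tracker.
readings = [(20.0, 3.0), (-25.0, 5.0), (2.0, -30.0), (5.0, 5.0)]
gestures = [classify_gesture(pitch, yaw) for pitch, yaw in readings]
```

In practice the threshold would need tuning per user and camera placement, and some hysteresis would help avoid flicker near the boundary.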

This is just with the non-commercial license. The full commercial license (~$3000?) gives you access to lip/mouth and eyebrow tracking, as well as much more flexibility in how you use their API with different or multiple cameras and in accessing image data.

Of course, there are other efforts to produce similar results. Mutual-information-based template trackers, for instance, seem to be state of the art. Take a look at recent work by Panin and Knoll using OpenTL:

I imagine a lot of people would like this technology.…

Keyframe based modeling

I’ve been playing with MSERs while trying to implement an algorithm for feature-based object tracking. The algorithm first finds MSERs, warps them to circles, describes them with a SIFT descriptor, and then indexes keyframes of SIFT vectors using vocabulary trees. Of course, that’s a ridiculously simplified explanation, but look at what it’s capable of!
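To make the keyframe-indexing step a little more concrete, here is a rough sketch under heavy simplification. It uses a flat vocabulary of visual words rather than a hierarchical vocabulary tree, toy 2-D descriptors rather than 128-D SIFT vectors, and skips MSER detection entirely. Every name in it (`quantize`, `build_index`, `best_keyframe`) is hypothetical.

```python
import math
from collections import Counter, defaultdict

def quantize(descriptor, vocabulary):
    """Index of the visual word (centroid) nearest to `descriptor`."""
    return min(range(len(vocabulary)), key=lambda i: math.dist(descriptor, vocabulary[i]))

def build_index(keyframes, vocabulary):
    """Inverted index: visual word -> set of keyframe ids that contain it."""
    index = defaultdict(set)
    for frame_id, descriptors in keyframes.items():
        for d in descriptors:
            index[quantize(d, vocabulary)].add(frame_id)
    return index

def best_keyframe(query_descriptors, index, vocabulary, n_frames):
    """Vote for keyframes that share visual words with the query."""
    votes = Counter()
    for d in query_descriptors:
        frames = index.get(quantize(d, vocabulary), set())
        if not frames:
            continue
        # Words seen in fewer keyframes are more distinctive (IDF weighting).
        idf = math.log(n_frames / len(frames)) + 1.0
        for frame_id in frames:
            votes[frame_id] += idf
    return votes.most_common(1)[0][0] if votes else None

# Toy vocabulary and keyframes with made-up 2-D descriptors.
vocabulary = [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0), (1.0, 1.0)]
keyframes = {
    "front": [(0.1, 0.0), (0.9, 0.1)],
    "side": [(0.0, 0.9), (1.0, 1.0), (0.9, 0.1)],
}
index = build_index(keyframes, vocabulary)
match = best_keyframe([(0.05, 0.1), (1.0, 0.0)], index, vocabulary, len(keyframes))
```

A vocabulary tree replaces the linear scan in `quantize` with a descent through hierarchically clustered centroids, which is what keeps lookups fast for large vocabularies.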

Dynamic Scene Perception Eye-Movement Data Videos and Analysis

Over the past 2 years, I have been working under the direction of Prof John M Henderson together with Dr Tim J Smith and Dr Robin Hill on the DIEM project (Dynamic Images and Eye-Movements). Our project has focused on investigating active visual cognition by eye-tracking numerous participants watching a wide variety of short videos.

We are in the process of making all of our data freely available for research use. We have also worked on tools for analyzing eye-movements during such dynamic scenes.

CARPE, or more bombastically, Computational Algorithmic Representation and Processing of Eye-movements, lets you visualize eye-movement data together with the video it was recorded with in a number of ways. It currently supports low-level feature visualizations, clustering of eye-movements, model selection, heat-map visualizations, blending, contour visualizations, peek-through visualizations, movie output, binocular data input, and more. The videos shown above on our Vimeo page were all created using this tool. Head over to Google Code to check out the source code or download the binary. We are still in the process of streamlining this process by creating manuals for new users and uploading more of the eye-tracking and video data so …
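As a rough illustration of what a heat-map visualization involves, the sketch below sums a Gaussian around each gaze sample for a single frame. It is not taken from CARPE: the function name `gaze_heatmap`, the grid size, the `sigma` value, and the normalisation to a peak of 1 are all assumptions.

```python
import math

def gaze_heatmap(fixations, width, height, sigma=1.5):
    """Sum an isotropic Gaussian centred on each (x, y) gaze sample."""
    heat = [[0.0] * width for _ in range(height)]
    for fx, fy in fixations:
        for y in range(height):
            for x in range(width):
                d2 = (x - fx) ** 2 + (y - fy) ** 2
                heat[y][x] += math.exp(-d2 / (2.0 * sigma ** 2))
    peak = max(max(row) for row in heat)
    if peak == 0.0:
        return heat  # no gaze samples, so nothing to normalise
    return [[v / peak for v in row] for row in heat]

# Hypothetical gaze samples from several viewers on a 10x8 frame:
# two land close together, one elsewhere.
fixations = [(2, 2), (2, 3), (7, 5)]
heat = gaze_heatmap(fixations, width=10, height=8)
```

The normalised grid can then be colour-mapped and blended over the corresponding video frame. Where viewers look at the same place, the Gaussians overlap and the map gets hotter.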

Augmented Sculpture Project

This will be my second year supervising the Digital Media Studio Project at the University of Edinburgh. The course is a mix of over 60 MSc students from Digital Composition, Sound Design, Digital Design in Media, and Acoustic and Music Technology. Each of the 10-15 supervisors pitches a project proposal, and the students decide which ones they’d like to participate in. This year, I proposed Augmented Sculpture, and 3 students signed up: 2 Sound Designers and 1 Digital Designer. So far, they have managed to communicate tracking data via the reacTIVision framework and to combine it with a life-sized sculpture to interact with a sonic environment built in Max/MSP.

Chandan, Helen and Ev playing with a reacTIVision-controlled Max/MSP patch developed for the Digital Media Studio Project at Edinburgh University. This is the very first test run of the system, and it worked!

Follow more developments on their blog.…