Ever wonder what happens when you’ve been accused of violating copyright multiple times on YouTube? First, YouTube redirects you to its “Copyright School” whenever you visit the site, forcing you to watch a Happy Tree Friends cartoon whose main character is dressed as an actual pirate:

Second, I’m guessing, your account will be banned. Third, you cry and wonder why you ever violated copyright in the first place.

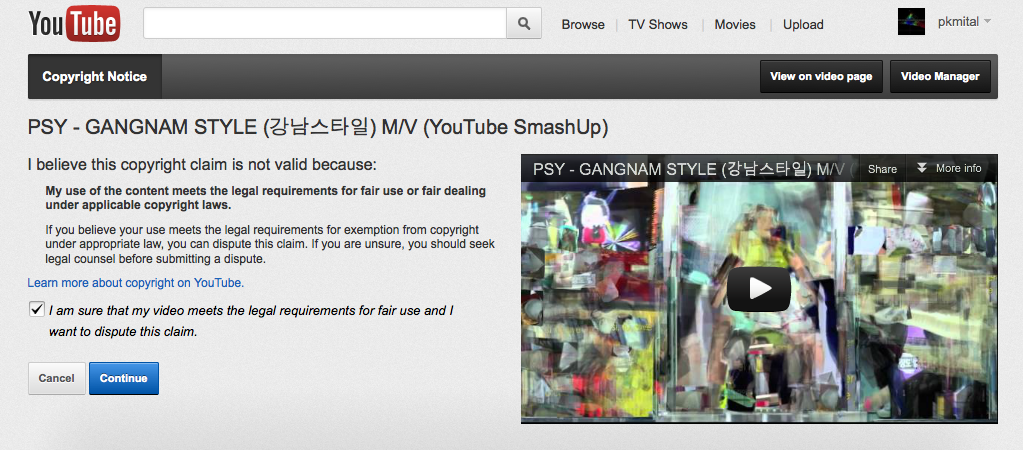

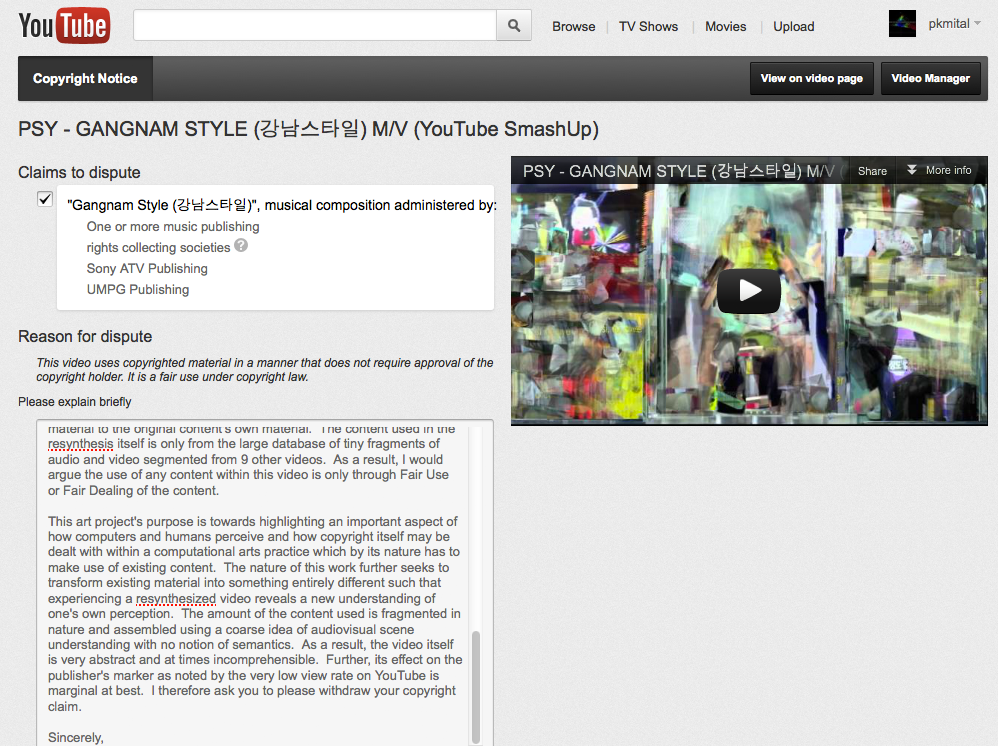

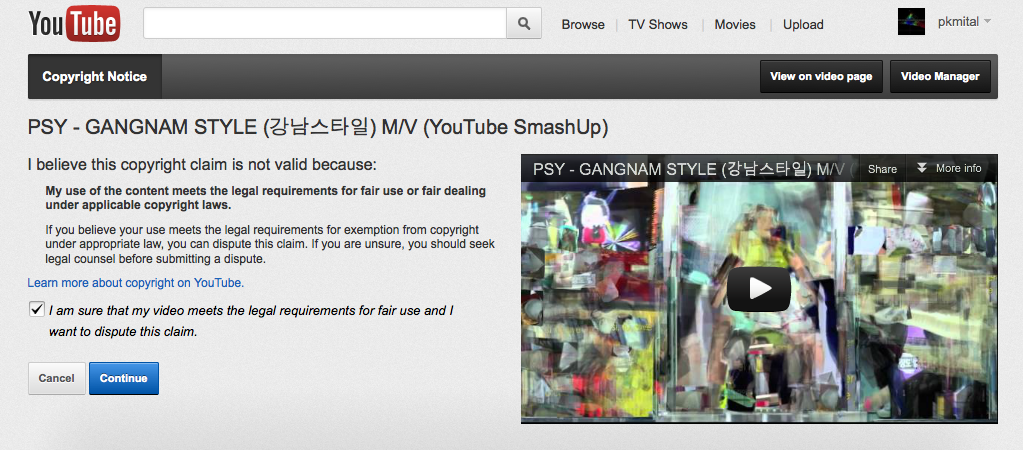

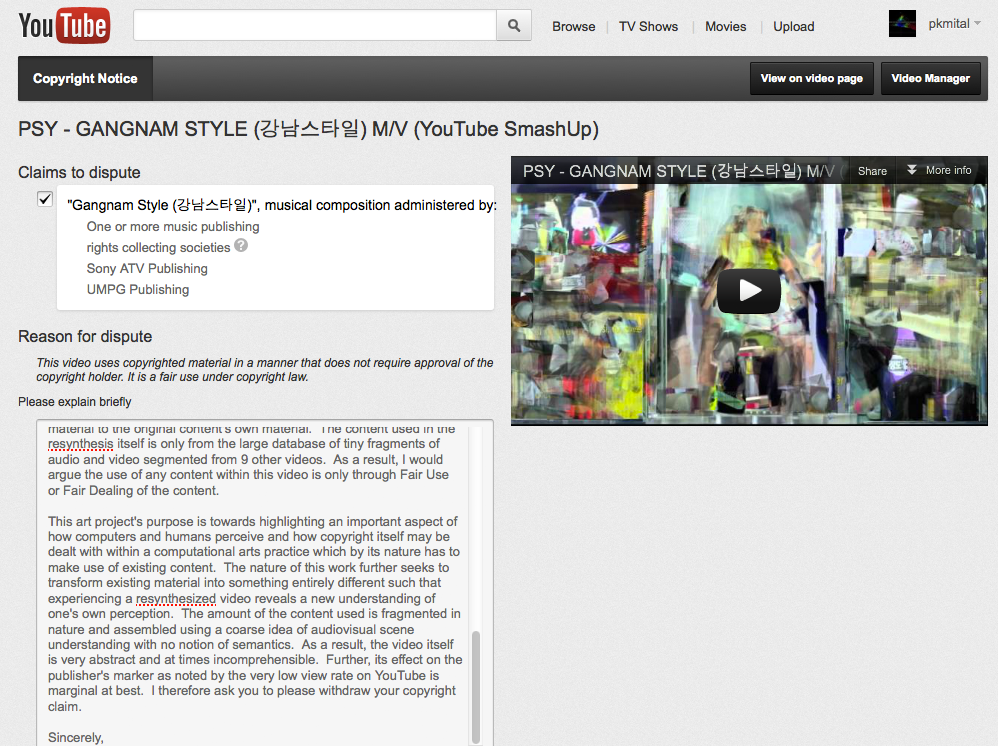

In my case, I’ve disputed every one of the four copyright violation notices I’ve received, on the grounds of Fair Use and Fair Dealing. Here’s what happens when you file a dispute using YouTube’s online form:

Three of the four claims were dropped after I filed disputes, though I’m still waiting to hear back about the dispute shown above. Read the dispute letter to Sony/ATV and UMPG Publishers in full here.

The picture above shows a few stills from my Smash Ups. The process, described in greater detail on createdigitalmotion.com, is part of my ongoing research into how existing content can be transformed into artistic styles reminiscent of analytic cubist, figurative, and futurist paintings. The process to create the videos …